Earlier this month, Anjelica Jade Bastién pointed to Jared Leto’s performance in Suicide Squad—the preparations for which involved watching footage of brutal crimes and sending his fellow cast members used condoms—as evidence that “Hollywood Has Ruined Method Acting.” A reader who’s been a member of the Actors Studio for 15 years is outraged by Leto’s antics and the commentary surrounding them:

The pop understanding of Method Acting is that an actor walks around the set in character, goes home in character, does weird stuff, and ruins the environment for everybody else on set. And that is just NOT what Method Acting is. Method Acting is using your own experiences to make a role deeply personal, particularly when your character has moments that diverge from your experiences.

If you are playing Catherine the Great and want me to call you “Your Highness” on set, that’s perfectly fine. That’s your process. But if you treat the PAs like serfs, you’re just a jerk.

Or, as another reader puts it:

In order to play a killer, one does not need to go out and kill people. That is not acting; that’s insanity.

In a previous note, my colleague Chris had much more on method acting from other readers and actors. What’s striking to me about these comments, with the distinction they draw between literally experiencing something and using an actor’s art to imitate it, is that they echo a debate from more than a century ago, when acting in movies was something entirely new. Back then, as Annie Nathan Meyer wrote in the January 1914 issue of The Atlantic,

Many good souls to-day—after attending their first performance of the modern highly perfected moving pictures—pronounce the death of the art of acting. … Now I am frankly of the opinion that it is not the art of acting that is in any danger, but that it is rather that a certain tradition of acting is indeed passing away. … The stage in many ways has held curiously aloof from the spirit of its age. … The truth is that the freedom of the theater, its right to mirror life untrammeled and unquestioned, has not been won in the sense that such freedom has been won in the other arts.

She goes on to argue that the theater of her time—when it isn’t prioritizing historical or allegorical subjects over realistic, relatable narratives—is caught up in attempts to make settings and costumes seem real, while representations of character remain grandiose and stilted. In this way, the advent of film provides an opportunity for theater:

Will the drama cease to concern itself with an eye-deep realism and concern itself with the soul-drama in which the cinematograph will scarcely attempt to rival it? … This is my hope. … Hopelessly outclassed in realism, in the apotheosis of the commonplace, by the modern photographic invasion, the drama will—even as painting did before it at the oncoming of the photograph three quarters of a century ago—escape into the realms of a heightened personality and an enriched imagination. … The modern actor must … give us an art so personal, so elusive, that the camera cannot follow him into the new realm at all.

Twenty-two years later, in his 1936 essay “The Work of Art in the Age of Mechanical Reproduction,” the German critic Walter Benjamin was also skeptical that film could ever capture an actor’s art:

The stage actor identifies himself with the very character of his role. The film actor very often is denied this opportunity. His creation is by no means all of a piece; it is composed of many separate performances. … Let us assume that an actor is supposed to be startled by a knock at the door. If his reaction is not satisfactory, the director can resort to an expedient: when the actor happens to be at the studio again he has a shot fired behind him without his being forewarned of it. The frightened reaction can be shot now and be cut into the screen version. Nothing more strikingly shows that art has left the realm of the “beautiful semblance” which, so far, had been taken to be the only sphere where art could thrive.

Enter method acting, the modern acting technique that promised all the “heightened personality and … enriched imagination” that Meyer had called for in 1914.

Developed by Lee Strasberg, the artistic director of New York City’s Actors Studio from 1951 until his death in 1982, it reached its height in the 1950s and ’60s, when Strasberg trained actors like James Dean, Jane Fonda, Paul Newman, and Marilyn Monroe in his signature technique. As if in response to the discontinuity of filming that Benjamin criticized above, method acting required actors to consider their characters as complete people, with identities and pasts extending beyond the boundaries of a play or a film. It also made acting personal, requiring intense introspection from actors to help them identify with a role. Here’s how the first reader quoted above describes it:

If you’ve never had a child, and your character’s child goes missing, THAT’S where the Method can help. Have you ever felt desperation and loss like that? So you explore, you do affective memory, maybe sub-personality work, maybe create a place where you felt loss, until you hit on the one that works. Then you own it, keep it to yourself, and let it happen on camera.

You can watch Strasberg himself talk about the method in the clip below (the interview starts about 30 seconds in):

For an art that had transformed from public performance into something experienced alone on either side of a camera’s lens—forcing actors to act, in Benjamin’s words, “not for an audience but for a mechanical contrivance”—these private, deeply personal techniques made sense. Method acting, with all the emphasis it placed on emotional depth and subtlety, was perfect for a medium that now allowed long close-ups of a character’s changing face, flashbacks to gradually reveal her past, soundtracks to complement a mood, and more variation in scene and setting than had ever been possible onstage. For all Meyer’s hope that stage actors would surpass the cinema in “soul-drama,” film offered new opportunities for that drama to be fulfilled—and method acting provided a transformative way to do it.

Today, though, as Bastién notes, “going to great lengths to inhabit a character is now as much a marketing tool as it is an actual technique”—and the private transformations that once constituted method acting have been replaced, at least in the popular understanding of the term, with more spectacular stunts. Leto sent his coworkers a dead pig and a live rat in pursuit of the Joker’s mindset; Leonardo DiCaprio slept in an animal carcass for his role in The Revenant. Reading Benjamin’s essay with this in mind, another passage jumped out at me:

Never for a moment does the screen actor cease to be conscious of this fact[:] While facing the camera he knows that ultimately he will face the public, the consumers who constitute the market. This market, where he offers not only his labor but also his whole self, his heart and soul, is beyond his reach. During the shooting he has as little contact with it as any article made in a factory. … The cult of the movie star, fostered by the money of the film industry, preserves not the unique aura of the person but the “spell of the personality,” the phony spell of a commodity.

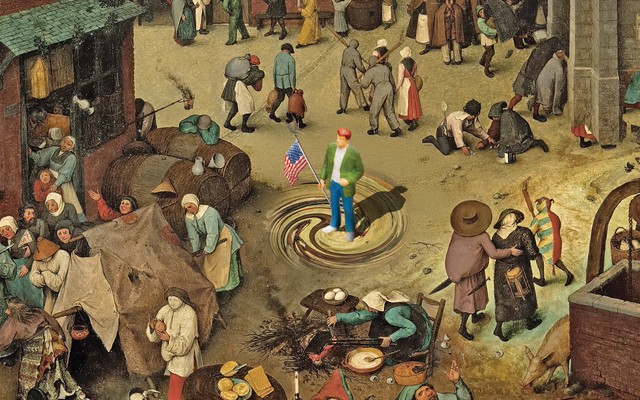

This era in Hollywood, as my colleague David has written, is more factory-like than ever—reliant on blockbusters, repackaging sequel after remake after reboot, and selling stunts like Leto’s and DiCaprio’s as much as it tries to sell films. It’s an industry built on bombast and spectacle, made for a massive public, and it lends itself to phony commodifications of an actor’s work.

Yet meanwhile, we audiences consume acting more privately than ever, on personal laptops and tablets and smartphones, via streaming accounts whose algorithms guess what we like. We chill with Netflix, oh-so-intimately, and spend our weekends binging on serials, privately treating ourselves to hours of indulgence and pleasure and shame. Watching alone, we invest ourselves deeply in the lives of characters, performing the same kinds of relational, introspective work that true method actors do.

So maybe method acting isn’t dead. Maybe it’s the perfect time for actors to reclaim it, particularly if Hollywood wants to reach audiences where they are. Bring back introspection and personal reflection for an era of small reflective screens, to stage a private confrontation: just you and the actor, alone in the dark.