The Literate Computer

New kinds of software have served as advisers on the wording of a portion of this article

BY BARBARA WALLRAFF

Optimism

AT COLORADO STATE UNIVERSITY, IN FORT COLLINS, about sixty miles north of Denver, the 2,200 freshmen enrolled in English-composition classes visit the Computer-Assisted Writing Center once or twice a week. They sit at the lab’s terminals, type drafts of writing assignments they were given in class, run them through a series of computer programs, and, a few minutes later, collect sheaves of printouts. These contain the draft plus a dozen kinds of suggestions and analyses. The suggestions have to do with deleting, changing, or at any rate thinking about particular words, phrases, and punctuation marks that the student has used. The analyses consider the writing overall: Has the student used a lot of passive verbs? Abstractions? Very long sentences? Are the sentence patterns repetitive? What is the draft’s “readability grade” (a number equal to the years of schooling a reader could be expected to need to understand the writing, as determined by a standard formula)? What is the draft’s readability grade according to four slightly different formulas? The students take the printouts home, look them over, scribble notes and revisions on them, and then go back to the Computer-Assisted Writing Center to edit their work and make new printouts, which they turn in to their instructors.

Now that computers that process words and check spelling have ceased to be exotic, here come computers that process language—or, in the jargon, “natural language” (a term meant to distinguish languages like English and Urdu from languages like COBOL and C). Many natural-language-processing computer systems, even many kinds of them, exist today. Probably the most familiar kind responds to questions by answering them. The systems to be discussed in this article, though, respond by questioning the wording of the questions. That is, they represent progress beyond word-processing and spelling-checking toward checking grammar, usage, and writing style.

The system at Colorado State, named Writer’s Workbench, is actually not very new. Writer’s Workbench has been in use at the university since 1981; it was up and running by 1979 at AT&T Bell Laboratories, where the research scientists Lorinda Cherry and Nina Macdonald developed it. Compared with most computer-related things, which seem to go out of date as quickly as number-one songs do, Writer’s Workbench is venerable.

The Writer’s Workbench tradition at Colorado State began shortly after Professor Charles Smith, a medievalist in the school’s English department, who happens also to be interested in computers, heard about the system. At that time Writer’s Workbench was a bundle of twenty-three programs that technical writers at Bell Labs used. Smith called AT&T and struck a deal, whereupon he and his colleague Kate Kiefer began to adapt the programs to the different realities of freshman comp. Soon they were ready to open what was then known as the Computer Text Analysis Laboratory to a small group of freshmen who had agreed to take part in an experiment.

The purpose of the experiment, of course, was to find out whether the customized Writer’s Workbench would help students improve their composition skills. Colorado State’s English department has long since concluded that it does help students—and instructors. Some instructors like it because it saves them time. Others just plain like it, and a few of them have been enthusiastic enough to adapt versions of the system to new uses. Today students taking courses in English as a second language also visit the Computer-Assisted Writing Center regularly, and at a second computer lab, students in the business program develop their business-writing skills. More than sixty other universities and high schools around the country use Writer’s Workbench as well.

About two years ago AT&T came out with a new version of the system for technical writers, which is marketed to people rather than institutions. At the same time, it started calling the earlier version of the system the Collegiate Edition. The Technical version has some special features. For example, its users may choose to see the suggestions and analyses on screen, instead of in the form of printouts. Also, they may choose which programs to run—which kinds of help they want. Users of the Collegiate Edition get page after page of help, like it or not.

The Technical version consists of twenty-five programs to the Collegiate Edition’s forty, but oddly, it takes up nearly twice as much space in the computer’s memory. Both systems, though, take up too much memory to run on most personal computers. Also, Writer’s Workbench requires the UNIX operating system, which few PCs have. And so in recent years computer entrepreneurs have sought to fill the gap, with “style-analyzing” programs for PCs. Most of these have been directly or indirectly inspired by Writer’s Workbench—as the entrepreneurs are for the most part happy to acknowledge. The developer of Grammatik II (one of two “Editor’s Choice” selections among five style analyzers that were reviewed in PC Magazine in 1986) was a relatively enthusiastic former user of Writer’s Workbench. The developers of Punctuation & Style (the other “Editor’s Choice”) started with the Writer’s Workbench “source code” — the system’s user-unfriendly core. They simplified it and translated it to run on popular PC operating systems and to work with popular word-processing programs.

AT THE IBM THOMAS J. Watson Research Center laboratory situated in the hamlet of Hawthorne, New York, some twenty miles north and a jog east of La Guardia Airport, the computer system Critique is evolving. The brainchild of George Heidorn and his research group—Karen Jensen, Stephen Richardson, Lisa Braden-Harder, Slava Katz, and Yael Ravin—Critique is like Writer’s Workbench in that it makes suggestions about writing and presents analyses of it, on printouts or on screen. It is unlike Writer’s Workbench in that it is not a product. Nor, according to official IBM sources, is it even a potential product. Critique is considered an effort of pure research in the field of natural-language processing. Notwithstanding, several hundred IBM employees whose jobs involve writing and editing have leave to use the system, and as an experiment IBM has recently made it available to the writing programs at Colorado State University and the University of Hawaii.

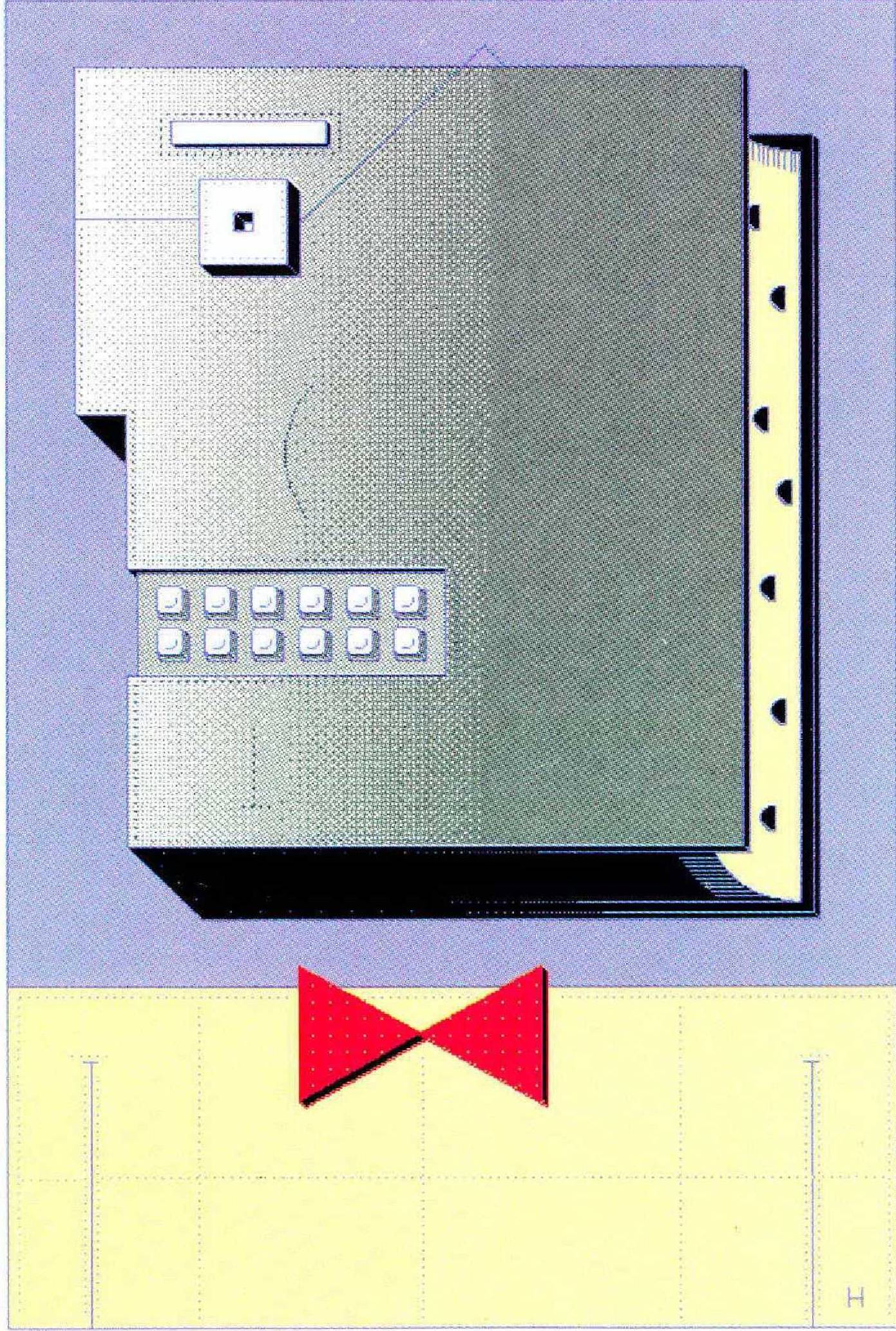

When a visitor wants to see what Critique can do, a member of the research group calls it up and then, generally, calls up a certain letter of recommendation, which has been specially mangled for demonstration purposes. The letter appears on the color screen—white type on a black background. A few keystroke commands turn Critique loose on it. Critique scans the letter a sentence at a time, at a rate of about five seconds per sentence. The first sentence reads: “I am writing in behalf of Susan Hayes, who’s application for employment you recently received.” When the system is done with that, it draws a red line under “who’s,” prints “whose” underneath, and goes on to the next sentence. It proceeds methodically to the end of the letter, underlining problem areas as it goes. Meanwhile the visitor, playing the role of a user, is free to respond to any red lines already on the screen—for instance, the one under “who’s.” If he doesn’t understand the problem immediately, or feels like pretending he doesn’t, he can press the SHOW key on the terminal. Beneath the red line a blue box will appear. It will contain a terse explanation— “CONFUSION OF ‘WHO’S’ AND ‘WHOSE’” — and beneath that, on a yellow background, the suggested correction, “whose.”
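Critique decides that “who’s” is wrong by parsing the whole sentence. As a far cruder illustration of the same kind of rule, the Python sketch below flags “who’s” whenever the next word appears on a short list of nouns. The word list, the function name `flag_whos`, and the word-list approach itself are assumptions made for this example, not Critique’s method.

```python
import re

# Hypothetical list of nouns that signal a possessive reading. A real checker
# would need part-of-speech information (or a parse), not a fixed word list.
POSSESSED_NOUNS = {"application", "book", "car", "idea", "job", "letter"}

# "who's" (straight or curly apostrophe) followed by the next word.
WHO_S = re.compile(r"\bwho[’']s\s+(\w+)", re.IGNORECASE)


def flag_whos(sentence: str) -> list[tuple[str, str]]:
    """Return (flagged text, suggested correction) pairs for 'who's' before a listed noun."""
    flags = []
    for match in WHO_S.finditer(sentence):
        next_word = match.group(1)
        if next_word.lower() in POSSESSED_NOUNS:
            flags.append((match.group(0), "whose " + next_word))
    return flags
```

Run on the first sentence of the demonstration letter, the sketch flags “who’s application” and suggests “whose application”; on “Ask who’s coming,” it stays silent because “coming” is not on the list. That dependence on a list is exactly what a parser is meant to remove.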

Not everything that Critique draws a red line under is an out-and-out grammatical error. Sometimes it makes judgment calls. For example, the second sentence of the letter of recommendation begins, “Because I have been her manager for more than three years,” and Critique underlines all of that (the sentence concludes, “I feel that I know her well enough to recommend her without reservation for your opening”). Press the key to see what’s the matter and the blue box will pop up with the message “SENTENCE TOO LONG/Consider turning the highlighted portion into an independent sentence./Omit the first highlighted word, and, if appropriate, use a conjunct (e.g., ‘however,’ ‘consequently’) at the beginning of the second sentence.”

Sometimes a user, having read certain of the messages that appear in the blue box, will experience an internal conflict. The user may, for example, suddenly feel that Critique is a self-important mechanical meddler, that his own judgment about sentence length is wholly adequate, and that if a person is unable to read with ease the sentence in question, then that person is of subnormal intelligence. The user is always free to disregard Critique’s advice and leave the sentence as is. Here, impelled by this feeling, he may be ready to do just that. Then again, he may recognize that the system means no personal affront but rather was created—after considerable nontrivial research—to be a tireless helper and guide. He may feel humbled by the combined majesty of technology and well-intentioned, multicolored advice. He would like at least to be fair. He is ready to press the SHOW key again.

Now a whole screenful of explanatory prose appears. In this case, first comes praise for the clarity, precision, and utility of short sentences. Beneath that there’s a little chart showing the average length of the sentences used for technical instructions in manuals (fifteen words—the threshold at which Critique begins to object to too-long sentences, in any kind of prose), business letters (nineteen to twenty words), and academic papers and books (twenty-two to twenty-three words). Then the user is informed that “it is possible to reset the threshold value for sentence length. ...” The lesson concludes with a bit of information about the grammatical nature of the sentence in question and a paraphrase of the advice that was given in the blue box.
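The resettable sentence-length threshold is easy to approximate. This sketch splits text at end punctuation and reports every sentence longer than a threshold, defaulting to Critique’s fifteen words. The naive splitting rule, the name `long_sentences`, and the choice to flag only sentences that exceed the threshold (rather than those that reach it) are assumptions.

```python
import re


def long_sentences(text: str, threshold: int = 15) -> list[tuple[int, str]]:
    """Return (word count, sentence) for each sentence longer than `threshold` words."""
    # Naive split: a period, question mark, or exclamation point followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    flagged = []
    for sentence in sentences:
        n_words = len(sentence.split())
        if n_words > threshold:
            flagged.append((n_words, sentence))
    return flagged
```

The second sentence of the letter of recommendation runs to twenty-seven words, so it is flagged at the default setting; raising `threshold` to thirty, the software equivalent of resetting the threshold value, lets it through.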

At this point the user does whatever he wants with the sentence and proceeds to the next red underline.

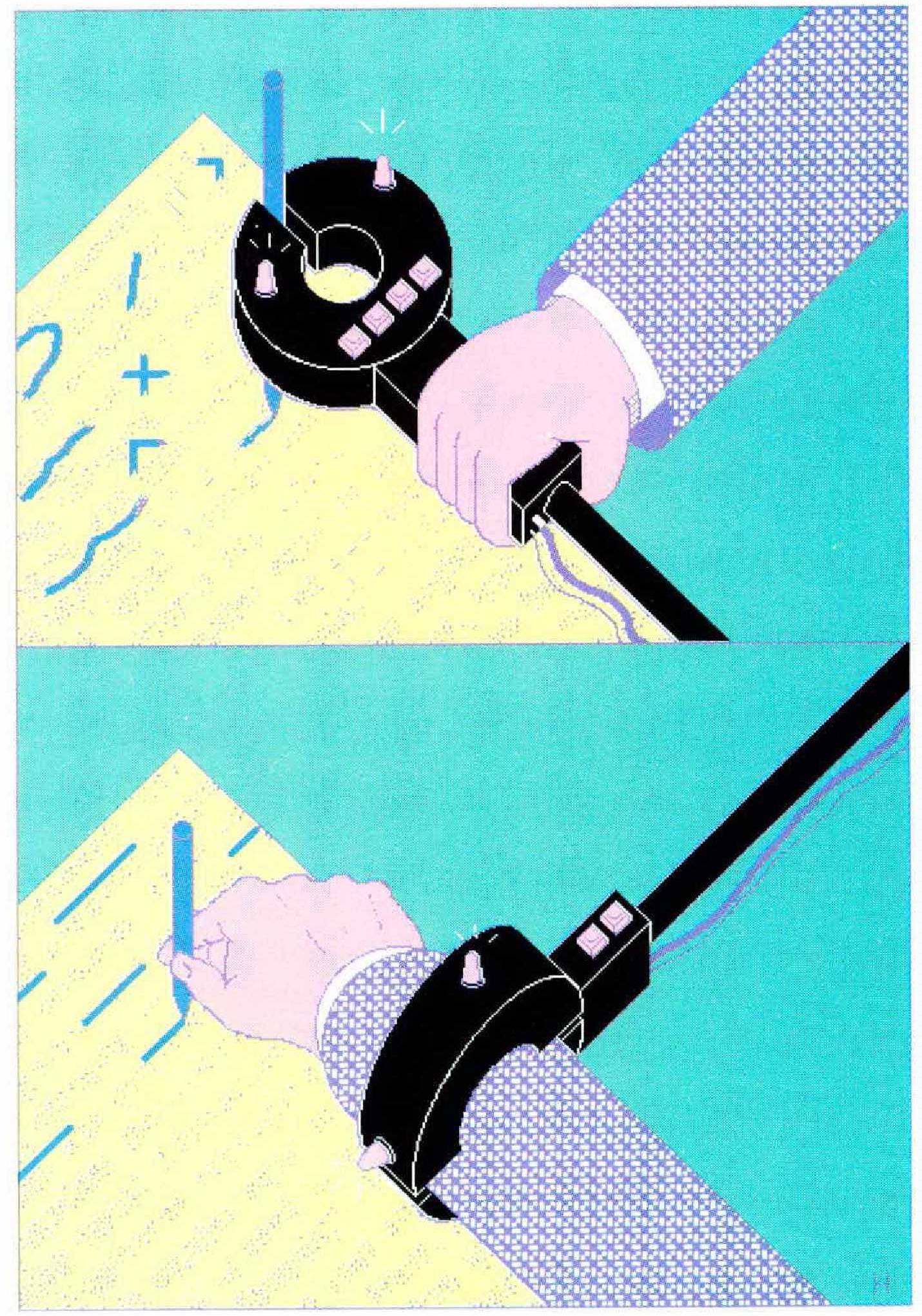

GILBERT SHARER DOESN’T SAY SO, BUT THERE MUST be moments when he envies the user who may do whatever he wants with a sentence. Sharer works in the technical-writing department of the Murray Ohio Manufacturing Company, which manufactures lawn mowers in Brentwood, Tennessee, ten miles south of the heart of Nashville. He, his colleagues, and their fellow technical writers at more than two dozen other organizations around the country use the Smart Expert Editor system, a.k.a. “Max.” For most of them, Max isn’t just an adviser—its word is law. Max is customized for each company that buys it. Companies typically buy it to work on owner’s and repair manuals. As a rule, what Max doesn’t approve must be revised and then run through the system again—and then maybe revised and run through again. What it does approve is set into type. The text will have earned a readability grade of eight or less, meaning that a reader with an eighth-grade education should be able to understand it easily. It’s possible, as part of the customization process, to set this threshold lower—but not higher. The Smart Expert Editor was developed by John M. Smart, the president of Smart Communications, Inc., of New York City. “We are a cult,” Smart says cheerfully. “Either you love this thing or you hate it.”

Gilbert Sharer doesn’t hate Max. He sees its usefulness for lawn-mower manuals and says he’d like to see what it could do with income-tax forms. But neither does he love it without reservation. “You lose a lot of the meaning of the words,” he says. “For literary use, I wouldn’t like it.”

Each Max system uses a dictionary, typically containing 2,500 to 7,500 words, that Smart Communications has customized for the purpose at hand. The dictionary for the Murray Company’s system includes motor, handles, blades, and so forth. In that dictionary each of these words has exactly one meaning: a lawn mower has blades to cut grass with, and so the grass may not also have blades in Murray’s Max. (About half a dozen exceptions to this rule occur in the standard Max dictionary. Practicality dictates that the system allow light, for example, to refer not just to weight but also to color and to brightness.) The dictionaries for all the systems, of course, include basics like prepositions, articles, conjunctions, numbers, and forms of verbs like be and have.

The 2,500 to 7,500 words in the dictionary are the only ones that the company’s technical writers will be allowed to use. (Linguistic researchers have demonstrated that an average person has about 45,000 words in his “reading-recognition” vocabulary; a typical desk-size dictionary contains more than 150,000 words, and Webster’s Third New International Dictionary 460,000.) The system will recognize other words, but only in order to object to them. It flags indefinite words and phrases (like things and a lot) and asks for definite “nomenclature” or measurements instead. It objects to contractions, acronyms, and “useless” words. It is also particular about how the words it does approve are used, objecting to long-windedness, passive verbs, long sentences, complicated sentences, complicated or incorrect punctuation, and more. In short, Max is a martinet—though it would object to the name.
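The heart of a controlled-vocabulary checker like Max is a lookup: every word is either in the approved dictionary or it draws an objection. This sketch assumes a tiny hypothetical lawn-mower dictionary and a hypothetical list of indefinite words; only the “NOT IN DICTIONARY” message is taken from Max’s printouts, and the other message and all the names are invented for illustration.

```python
import re

# Hypothetical miniature dictionary. Real Max dictionaries are customized
# per company and hold 2,500 to 7,500 words.
APPROVED = {
    "the", "a", "an", "you", "to", "of", "before", "is", "are", "be", "have",
    "turn", "off", "stop", "clean", "sharp", "motor", "handle", "handles", "blades",
}

# Hypothetical list of indefinite words that should become a measurement
# or proper nomenclature.
INDEFINITE = {"things", "lot", "recently"}


def check_vocabulary(text: str) -> list[tuple[str, str]]:
    """Return (word, message) pairs for every word the dictionary rejects."""
    report = []
    # Keep apostrophes inside words so contractions stay whole.
    for word in re.findall(r"[A-Za-z’']+", text):
        key = word.lower()
        if key in INDEFINITE:
            report.append((word, "INDEFINITE: USE MEASUREMENT OR NOMENCLATURE"))
        elif key not in APPROVED:
            report.append((word, "NOT IN DICTIONARY"))
    return report
```

“Turn off the motor before you clean the blades” passes untouched, while “Stop the motor to clean the grass” draws “NOT IN DICTIONARY” for grass. Contractions such as “don’t” are rejected too, simply because they are not in the word list.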

The limitations that Max imposes on vocabulary and syntax have a special appeal for companies that do business abroad: Max’s output can be translated into other languages automatically. Companion programs exist for translating Max-approved English into five languages—six if you count separately the Brazilian and Portuguese versions of Portuguese. Other programs do partial translations into eight more languages, including Japanese, Chinese, Swedish, Turkish, and Thai, speeding along the work of the eventual human translator. But, according to John Smart, the “product-liability crisis” is responsible for most of his sales. “Companies get sued for all sorts of things because the instructions aren’t clear,” he says. The requirement that Max’s output have a readability grade of eight or less is one feature designed to forestall lawsuits. Also, at every mention of a dangerous machine part, such as a lawn mower’s blades, or anything else that has special potential for attracting litigation, Max sets the terminal screen flashing and blinking, and refers the writer to a rule book that comes with the system. There he is reminded to recommend caution—and to do it in simple words and in the active voice.

TYPE “JENNY WANTED TO GO PUNK BUT HER FATHER put his foot down” on the terminal for the Rina system, at the General Electric Research and Development Center, in Schenectady, New York, and you’ll get the response “He put his foot on the floor?” Respond, in turn, “No. He put his foot down,” and Rina (which is a “personal” name, not an acronym) will come back with “He tried to block her plan?”

Rina, which, like Critique, isn’t a product, is something of an alter ego for its Israeli-born creator, Uri Zernik, who began developing Rina at the Artificial Intelligence Laboratory at the University of California at Los Angeles. He says he responded in much the same way the first time he heard “put his foot down,” after moving to this country eight years ago. “My ultimate objective is to make a computer behave just like a person—to succeed where people succeed, to make the interpretations that people make, wrong or right.” The system’s first response, above, is the kind of wrong interpretation that pleases Zernik.

Actually, Rina would no longer respond in this way to sentences containing “put his foot down,” unless Zernik erased the phrase from its memory to see what would happen in new contexts, because “put his [or her] foot down” is one of about two hundred figures of speech that Rina has at least partly “acquired.” Zernik considers that Rina has acquired a new phrase only when it—like a conscientious foreign-born person learning idioms—can distinguish a correct usage from a syntactically or conceptually incorrect one. For example, you don’t put your foot down “on” or “to” or “from” or “against” someone, nor do you “put down your foot.” And Jenny could not put her foot down about something her father had in mind: it is necessary for the person doing the putting to be in a position of authority.

Rina’s ability to recognize figures of speech and derive their actual meanings is only by the way. Rina is primarily designed to search for a particular kind of meaning in words, and to ask for advice when it doesn’t find such meaning. Rina “tries to extract plans and goals from sentences,” Zernik says simply. Rina is concerned more with the meaning of text than with its grammar. The system associates whole clauses with “linguistic” and “situation” patterns, most of which are somehow relevant to the plans and goals of individuals. For example, “Jenny wanted to go punk but her father put his foot down” would match the linguistic pattern “Person 1 wanted [something or other] but Person 2 [did something or other].” In this pattern “wanted” is likely to be introducing a goal of Person 1’s and “but” is a signal that Person 2 is probably going to interfere. The sentence would also match a situation pattern in which Person 2, Jenny’s father, has authority over Person 1—thereby suggesting a bit about the possible nature of his response. Because “put his foot down,” taken literally, is not a good match for any existing pattern for the reaction of an authority figure against a plan, Rina begins generating questions—or, actually, successive hypotheses about the meaning of the phrase, which are represented on screen by examples intended to test the hypotheses, followed by question marks. Thus “He tried to block her plan?”
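A regular expression can imitate the surface of the linguistic pattern just described, though nothing in the account of Rina suggests it works this way, and a regular expression knows nothing about authority, plans, or goals. In this hypothetical sketch, `match_goal_conflict` extracts Person 1, the goal that “wanted” introduces, and the clause after “but.” It makes no attempt to separate Person 2 from what Person 2 did, which is precisely the kind of step that requires more than pattern matching.

```python
import re
from typing import Optional

# Toy surface form of "Person 1 wanted [something] but Person 2 [did something]".
PATTERN = re.compile(
    r"^(?P<person1>[A-Z]\w*) wanted (?P<goal>.+?),? but (?P<reaction>.+?)\.?$"
)


def match_goal_conflict(sentence: str) -> Optional[dict]:
    """Return the pieces of a 'wanted ... but ...' sentence, or None if it doesn't fit."""
    m = PATTERN.match(sentence.strip())
    if m is None:
        return None
    return {
        "person1": m.group("person1"),
        "goal": m.group("goal"),              # "wanted" introduces a goal
        "interference": m.group("reaction"),  # "but" signals likely interference
    }
```

For Jenny’s sentence the sketch returns the goal “to go punk” and the interfering clause “her father put his foot down”; for “Time flies like an arrow” it returns None. What the clause after “but” actually means is left entirely open, which is where Rina’s questions begin.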

Zernik likes to work with figurative phrases in part, he says, because many of them “encapsulate something about plans and goals,” and in part for what seem to be anthropomorphic reasons. He says that he, too, is still constantly acquiring new English figures of speech, a process that helps him program Rina to respond the way a person would. Also, Zernik likes the fact that the meaning of figurative phrases cannot be found in their individual words. “The learning task is not just to learn ‘he put his foot down,’” Zernik says. “This task is very easy. The task is to have the correct syntax and to extract the meaning of the phrase.”

A number of natural-language-processing researchers small enough to count on your fingers is responsible for developing the four computer systems I have by now sketched. The number of members of the Association for Computational Linguistics, an international professional organization in the field, is above 2,000, according to Donald Walker, a researcher for Bell Communications Research, who is a former president of the ACL and now its secretary-treasurer. Membership in the ACL has quadrupled over the past decade, an increase that Walker believes parallels the growth of the field as a whole.

This army of researchers has been developing natural-language-processing systems of many kinds. There are systems that can summarize text. There are systems that can summarize selectively, finding references to particular topics in, say, a month’s worth of newspapers and recording the gist of what is said about those topics. There are systems that can respond in plain English to questions typed in plain English. There are systems that can respond in spoken English to spoken English. There are systems that can translate into German or Japanese and back again.

And there are limitations, more or less severe, on the abilities of all these systems. None of them can accept as input all the kinds of English that a person could be expected to accept, or understand—including much that is sloppy or ungrammatical. Nor can any of them generate as output a wide range of reliably grammatical, idiomatic English. Those two steps, one right after the other, amount to something like the ability to solve writing problems. So progress toward a system for scanning all the medical journals and summarizing the developments in a given specialty, or one for answering the questions of naval officers about the condition of their vessel, or one that will give stock quotes over the phone, is also progress toward a system able to correct sloppy English. And progress toward a system able to catch lapses from grammar, idiom, and clarity has implications extending to the far reaches of society.

Pessimism

“TWENTIETH-CENTURY ELECTRONICS HAS ALREADY turned some underlying ideas about what is the stamping ground of the mind and nothing else on it’s head. Before very recently, mathematical abstraction computing ability was one of the things that made us most unique, and that was a comfort. These days there is something useful, instead, that computers exist and their a lot better at computing than us.”

I wrote that paragraph to use as a test. Or, rather, I wrote a paragraph and then muddied it up in ways that, I imagined, would give Writer’s Workbench, Critique, Max, and Rina things to do and would demonstrate their individual strengths and weaknesses. Anyone can see that the paragraph needs help. An alert grammar school student would be able to solve some of the problems, though it would take an empathic professional editor—or maybe a mind reader—to cope with others. If those hypothetical persons represent points on a scale of editing ability, where are the computers on that scale? Which errors and infelicities can they catch, and why do they fail to catch others?

AT&T’s Writer’s Workbench, Technical version, churned out thirteen pages of suggestions for and analyses of the paragraph. The output was presented under headings that indicated which of the system’s twenty-five programs was reporting in. That is, the spelling checker made its report under the heading “Spelling,” the punctuation checker’s report came under “Punctuation,” and so on. Those two programs and a “grammar” checker (which, as the text under the “Grammar” heading notes, looks only for split infinitives and incorrect articles) didn’t find any errors.

The program responsible for “Word Choice” suggested omitting the “very” in “Before very recently” and replacing “one of the” with “a” or “one” alone, and “most unique” with “unique” alone. If I’d made those changes and run the text through again, the program would have advised me that unique tends to be misused and overused and that I might be better off with unusual or rare.

I flipped through to the analyses. At the top of the first page appeared a caveat, in capital letters: “BECAUSE YOUR TEXT IS SHORT (<2000 WORDS & <100 SENTENCES), THE FOLLOWING ANALYSIS MAY BE MISLEADING” — reasonable enough, as far as it goes. Below that, and on subsequent pages, I read: “The Kincaid readability formula predicts that your text can be read by someone with 13 or more years of schooling, which is rather high. ... In this text 33% of the sentences are complex and 0% are simple. [The other 67 percent were counted as compound or compound-complex sentences.] These percentages should be closer together. . . . You have appropriately limited your use of passives and nominalizations. ...” And on and on for ten pages.
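The standard Flesch-Kincaid grade-level formula, which we assume is what Writer’s Workbench means by the Kincaid formula, combines just two averages: words per sentence and syllables per word. Counting syllables exactly requires a pronouncing dictionary, so this sketch substitutes a rough vowel-group heuristic; that heuristic, and the simple sentence splitting, are assumptions.

```python
import re


def count_syllables(word: str) -> int:
    """Rough heuristic: count runs of vowels, discounting a silent final 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def kincaid_grade(text: str) -> float:
    """Grade level = 0.39 * (words/sentences) + 11.8 * (syllables/words) - 15.59."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z’']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one word")
    syllables = sum(count_syllables(w) for w in words)
    return 0.39 * len(words) / len(sentences) + 11.8 * syllables / len(words) - 15.59
```

Because syllables per word carry a weight of 11.8, long words push the grade up far faster than long sentences do. The formula also has no notion of meaning: a sentence of short, nonsensical words earns a low, “readable” grade, and with only a few sentences to average over, a single long one can swing the result, which is one reason for the caveat about short texts.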

I was fascinated by all this. But ultimately the sheaf of analyses began to look like a crowd cheering a naked emperor as he parades down the boulevard. Writer’s Workbench, though, doesn’t know that story. In fact, it hasn’t a clue about what any text means. Its analyses are done on the basis of syntax, or sentence structure, and take no account of semantics, or the meanings of the words that make up the structure.

Why didn’t any of the programs object to—at least—the “it’s” for “its,” in “on it’s head,” and the “their” for “they’re” in “their a lot better at computing than us”? (Among the further problems I’m disregarding here is that the “it’s” really should be a “their,” because the antecedent is “ideas”: “turned some underlying ideas ... on its head.” Several singular nouns come between the pronoun and its true, plural antecedent, and so, clearly, a system that doesn’t take meaning into account will be hard pressed to resolve this agreement problem.) The punctuation and spelling checkers didn’t object, because each of these would be a legitimate word in other contexts.

The most powerful component of Writer’s Workbench is a program, named Parts, that classifies the words in the text according to their parts of speech. A user can see the direct result of this program’s work on a printout of the text annotated with the part of speech that the program judges each word to be. Parts is 95 percent accurate on text at elementary-school readability levels and 90 percent accurate on text written by college freshmen. But it’s the indirect results that really matter. The output from Parts is the input for the other analysis programs, which use it to determine what proportion of a given text’s verbs are passive, infinitive, or forms of “to be”; what proportion of the sentences are simple, complex, compound, and compound-complex; and so on. (None of the style-analyzing programs designed specifically for personal computers has a feature comparable to Parts, and so none can do the elaborate analyses that Parts makes possible.)

Parts, though, never objects to anything. All it does is classify the words that are in the text. This is not an easy job. Many words in English can function as any of several parts of speech (that, for a particularly devilish example, can be an adjective, a relative pronoun, an adverb, or a conjunction). For all such words the program, having no information about meaning, must “guess” the part of speech on the basis of what parts of speech the other words in the sentence are. The other words, of course, may also be ones that function as any of several parts of speech.

In a way, Parts did pass judgment on the incorrect “it’s,” in “on it’s head”: it failed to select the sequence of a preposition, a pronoun, a form of the verb be, and a noun as the likeliest one for the phrase. It chose instead to interpret “head” as an adjective (as in “head coach”), making the phrase “on it’s head” grammatically equivalent to the “on” phrase in “Keep your mind on what’s important.”

BUT IT WAS MOSTLY for Critique’s sake that I misused “it’s” and “their” in the “Twentieth-century electronics” paragraph, made the “it’s” disagree in number with its putative antecedent “ideas,” and put an “us” where a “we” ought to be. IBM’s Critique doesn’t just determine parts of speech; it goes on to “parse,” or diagram, the sentences. This is quite a significant advance. It allows Critique (and other natural-language-processing systems with parsers, of which there are now quite a few) to entertain, as it were, suspicions about words and word relationships that Writer’s Workbench must take on faith. Indeed, Critique did flag the “it’s.” It also flagged the “most unique.” And that was all it had to say in the way of line-by-line commentary, though it did produce a one-page analysis and a set of not entirely accurate sentence diagrams.

Critique had the potential to catch the misused “their” and the “us” that should have been “we.” However, Critique being a work in progress, the research group hadn’t written those rules yet, and there was little urgency for the group to write them, since no plans exist to turn Critique into a marketable product. Rather than writing new rules similar to ones already in the system (for instance, the rule for when to flag who’s and whose), George Heidorn told me, “we concentrate on solving interesting research problems.”

Parsing sentences has been an extremely interesting research problem. Critique fails to generate a sentence diagram, or parse, about 20 percent of the time. Much of the rest of the time, the system generates multiple parses for a single sentence. Here the tricky part is picking out the right one.

Critique, like Writer’s Workbench, does its work on the basis of syntax, making inferences from the way the parts of speech in a sentence fit together—or the way what might be the parts of speech fit together. The syntax of even a sentence as simple as “Time flies like an arrow” permits several possible interpretations by a machine that lacks information about meaning. Maybe “time” is the verb and the sentence is a command. And then, “like an arrow” (in informal usage) might modify either the noun or the verb—whichever is which. Or maybe “like” is the verb, with “flies” as its subject and “arrow” as its object, and “time” a noun-adjective.
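The ambiguity can be made concrete. In this sketch a toy lexicon, our assumption rather than Critique’s, lists the parts of speech each word of “Time flies like an arrow” can take. Enumerating every combination gives eight tag sequences; a crude stand-in for grammar that requires exactly one verb leaves three, which correspond to the three readings above.

```python
from itertools import product

# Toy lexicon (an assumption for illustration): the tags each word can take.
LEXICON = {
    "time": ["NOUN", "VERB"],
    "flies": ["NOUN", "VERB"],
    "like": ["VERB", "PREP"],
    "an": ["DET"],
    "arrow": ["NOUN"],
}


def tag_sequences(sentence: str) -> list[tuple[str, ...]]:
    """Every combination of tags the lexicon allows for the sentence's words."""
    words = sentence.lower().split()
    return list(product(*(LEXICON[w] for w in words)))


def one_main_verb(tags: tuple[str, ...]) -> bool:
    """Crude stand-in for grammar rules: a clause gets exactly one verb."""
    return tags.count("VERB") == 1


all_seqs = tag_sequences("Time flies like an arrow")
readings = [tags for tags in all_seqs if one_main_verb(tags)]
```

The surviving sequences are NOUN VERB PREP (the proverb), VERB NOUN PREP (the command), and NOUN NOUN VERB (“time flies,” with “time” as a noun-adjective, are fond of an arrow). The question of whether “like an arrow” modifies the noun or the verb in the command reading does not show up at the level of tags at all; only a parse exposes it.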

A technical report presented in 1982 by William Martin, Kenneth Church, and Ramesh Patil, all then at the Massachusetts Institute of Technology, explored the scope of the multiple-parse problem. (In doing so, they used a syntactic parser different from the one that is built into Critique.) The researchers reported that when they ran about 500 sentences of ordinary business English through their parser, eight of them resulted in more than 300 parses apiece. The record was a jaw-dropping 958 possible parses, for the sentence “In as much [sic] as allocating costs is a tough job I would like to have the total costs related to each product.”
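One standard way to see why parse counts can climb into the hundreds is to count the ways a string of phrases can be grouped into a binary tree. That count is a Catalan number, which grows roughly fourfold with each added phrase. This is a general combinatorial illustration, not necessarily the analysis in the MIT report, and a real grammar licenses only some of these groupings.

```python
from math import comb


def catalan(n: int) -> int:
    """Number of distinct binary trees with n internal nodes, i.e. n + 1 leaves."""
    return comb(2 * n, n) // (n + 1)


def bracketings(phrases: int) -> int:
    """Ways to group a sequence of `phrases` items into a single binary tree."""
    return catalan(phrases - 1)
```

Three phrases can be grouped two ways, four phrases five ways, and seven phrases 132 ways, so a sentence need not be long before its possible structures run into the hundreds.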

I haven’t seen what Critique makes of that sentence, but I did see it diagram “Test results show that sand filters produce more even results”—also a doozy, since there isn’t a single word in it that is invariably one part of speech. When a sentence has multiple parses, Critique notes that fact on screen and presents a diagram of the parse that is its best guess, according to the rules that it follows. For this sentence its best guess was that the main verb was “test” and that the sentence was a command. Karen Jensen manually overrode that, informed the system that “show” was the main verb, and asked for the parse that was likeliest in light of that. The diagram it came back with had “filters” and “results” as additional verbs and “produce” as a noun.

Somewhere around “mathematical abstraction computing ability” Gilbert Sharer paused in typing the “Twentieth-century electronics” paragraph on his terminal to warn me, “Max will tear this to pieces.” A moment later Max proceeded to do just that. The printout announced that the system had found a total of twenty-four errors in the paragraph’s sixty-eight words, for an error rate of 35 percent. (The highest total word count I can arrive at, by counting “Twentieth-century” and “it’s” as two words apiece, is sixty-six—but that’s a side issue.)

“Twentieth-century” started the paragraph off on the wrong foot: “USE FIGURES SEE-33,” the printout commanded. Sharer translated: section 33 of the rule book that comes with Max presents rules for when to spell out numbers and when not to. In “some underlying ideas about what is the stamping ground of the mind,” Max branded “underlying” and “what is” both “AWKWARD CONSTRUCTION SEE-5.” And then Max considered “stamping” (in “the stamping ground of the mind”) to be an unacceptable substitute for “marking,” and “computing” (in “mathematical abstraction computing ability” and in “their a lot better at computing”) and “computers” (in “computers exist”) to be unacceptable substitutes for “calculating” and “calculators.” Max objected to “recently” (in “Before very recently”) and “a lot” (in “their a lot better”) on the grounds that each should be replaced by a measurement, “things” (in “mathematical abstraction computing ability was one of the things that made us most unique”) on the grounds that it should be replaced by proper nomenclature, and “useful” (in “these days there is something useful, instead”) on the grounds that it is useless. There were other error messages, the most common of which was “NOT IN DICTIONARY.” Words that aren’t in the dictionary, of course, are not allowed. Two of the words that triggered this response were “ideas” and “mind.”

It’s one thing for Jenny’s father to put his foot down, Uri Zernik explained; it’s quite another for twentieth-century electronics to turn underlying ideas on its, or their, or any, head. He declined even to present the test paragraph to Rina. “The program would not understand it at all,” he told me. For one thing, “Rina would say, ‘Please tell me what “Twentieth-century” means. Please tell me what “electronics” means.’ It’s not just words you have to put into this computer: What does ‘electronics’ mean?” Explaining “electronics” to Rina would involve finding the appropriate place for it in the system’s diagrams of conceptual representations and marking out the relationships between the new concept, electronics, and concepts already present in the diagrams, such as television and computers and signals—provided those were present, and they are not. Rina would have other problems, too, with the “Twentieth-century electronics” paragraph. There are no people in it, and it is only tangentially related to plans and goals. To Zernik, this was almost a philosophical failing. Certainly, the language that Rina is designed to respond to is about people—or characters, like Jenny and her father—and their goals.

Zernik did, though, home right in on the figure of speech “turned ... on its head.” The phrase was evidently new to him, and he asked me to use it in other sentences. Eventually we agreed that it had to do with the upsetting of predictions—whereupon Zernik pointed out that predictions bear some relation to goals. But after we had discussed the phrase further, he said, clearly disappointed, “Maybe it’s not plans and goals but beliefs here.”

Until they acquire a complex internal representation of meaning—in the jargon, this is called a knowledge base or knowledge representation—computer systems are going to miss not only the plans and goals of characters but also many common, and seemingly simple, writing problems. Whether or not the adjectives that precede a noun should have commas between them depends on the adjectives’ meaning (“the big, expensive computer and the big white computer”), and sometimes on context (“Put the big expensive computer next to the small expensive computer”). Whether a particular relative clause should begin with a comma and “which,” with no comma and “that,” or (the rare “exceptional” pattern) with no comma and “which” depends on meaning. Dozens, probably hundreds, of similar meaning-related problems lurk at the line-by-line level of English. And of course meaning is essential to larger writing considerations, such as whether a given sentence, paragraph, or document is well organized and makes sense. To be effective writing advisers at any level, then, computers must compute not just structure but also meaning.

Researchers—Uri Zernik, for example—are working on it. The great problem they face is that words are able to carry so many different kinds of meaning. The sentence “Mr. Smith visited a restaurant” implies food and waiters and a check and all kinds of things that are not present in the actual words but that a human reader will know to take for granted in the subsequent text. Also, a human reader will know which of the usual implications to disregard if the text specifies that Mr. Smith went into the restaurant to ask for the time or that Mr. Smith is an inspector for the board of health. Quite complicated sequences can be implied in quite simple sentences. A favorite plans-and-goals-related example of Uri Zernik’s: “Wilma was hungry. She opened the Michelin guide.”

People seem to be able to take in explicit and implicit meanings more or less simultaneously. But no one knows exactly how we do it. No one can even say exactly what we’re doing: a satisfactory list or model of everything that a person might be expected to know has yet to be created. “Knowledge is not just a data base,” says Mark Underwood, the manager of product development at Computer Cognition, a San Diego company that is working to create a natural-language-processing system modeled on human cognition. Underwood observes, wryly, that although today’s computer systems are barely able to differentiate between “it’s” and “its” or other homonyms, “people who can do that right out of school come pretty cheap.” He adds, “We tend to forget how gifted the average person is in terms of knowledge representation.”

Perspective

TODAY’S NATURAL-LANGUAGE-PROCESSING SYSTEMS can do marvelous things: summarize, answer questions, translate. As a rule, though, a given system will be able to recognize, and respond to, the explicit meaning of very literal and particular kinds of text or else very particular kinds of meanings in more varied text. That is, the most complex of today’s systems have depth or breadth but not both.

In recent months I have asked quite a few people associated with natural-language processing how long they thought it would be before computers could really edit text—that is, could vet all kinds of text as well as an empathic professional editor can. Their answers ranged from “the end of the millennium” to “maybe never.” Terry Winograd, an associate professor of computer science and linguistics at Stanford University and, at age forty-one, one of the grand old men of natural-language processing, told me flatly, “It’s not in sight.” He added, “I’m not saying it will never happen, but it’s not something that can be done by improving and tuning up existing systems.” What the problem boils down to, as he sees it, is that editing is a process of making interpretations and decisions, and “that’s not something that computers are good at.”

Electronics has already begun revising our language. In making it possible for people, businesses, and governments to zip spoken and written words around the world, electronics has made it necessary for them to do so. Today when we demand information or action quickly, we mean within seconds, or perhaps hours—not days or weeks from now. The most important consequence for the world, probably, is that people who dispense information or make decisions—spokesmen, journalists, diplomats, executives—have proportionally less time to think. The important consequence for the language is that they have less time to decide how to express their thoughts. We are more promptly but less judiciously informed.

In making it possible for us to zip pictures around the world, electronics has made that necessary too, and has lightened the burden of description that words carry—or, from another point of view, usurped the craft and the art of description. At the same time, techno-talk, the metalanguage of machines, has infiltrated the rank and file of English. We have new words, like microwave and televise and modem. We have old words in new senses and new combinations, like remote and terminal and user-friendly. We have some new coinages in still newer forms, like ATM, TV, CD, VCR, VDT. We have new metaphors and analogies: new verbal inputs and outputs, a rewiring of our linguistic circuits. Only Luddites will insist that such change must be stopped—or will imagine that it can be.

Twentieth-century electronics has—as Writer’s Workbench, Critique, Max, and Rina have all failed to help me say—already overturned certain comfortable assumptions about where the unique provinces of the human mind begin and end. Until well into this century the ability to manipulate mathematical abstractions set us apart from every other creature and thing on the planet, and that was comforting. Today there are computers, far abler at computing than we, and this is useful.

Before it becomes possible for computers to use the kind of English that we use, we will need to get to know our language, and our mental processes, much better than we know them now. And as we learn how to delegate to machines those human tasks that turn out not to be beyond their grasp after all, we can hardly fail to gain new respect for the ultimate complexity of language and thought. Paradoxically, language and thought have allowed us to plumb countless worlds—real, possible, and wholly imaginary—but we are still far from fathoming language and thought themselves. □